- #UNITY 2019 FIXED UPDATE TOO FAST HOW TO#

- #UNITY 2019 FIXED UPDATE TOO FAST UPDATE#

- #UNITY 2019 FIXED UPDATE TOO FAST CODE#

- #UNITY 2019 FIXED UPDATE TOO FAST PC#

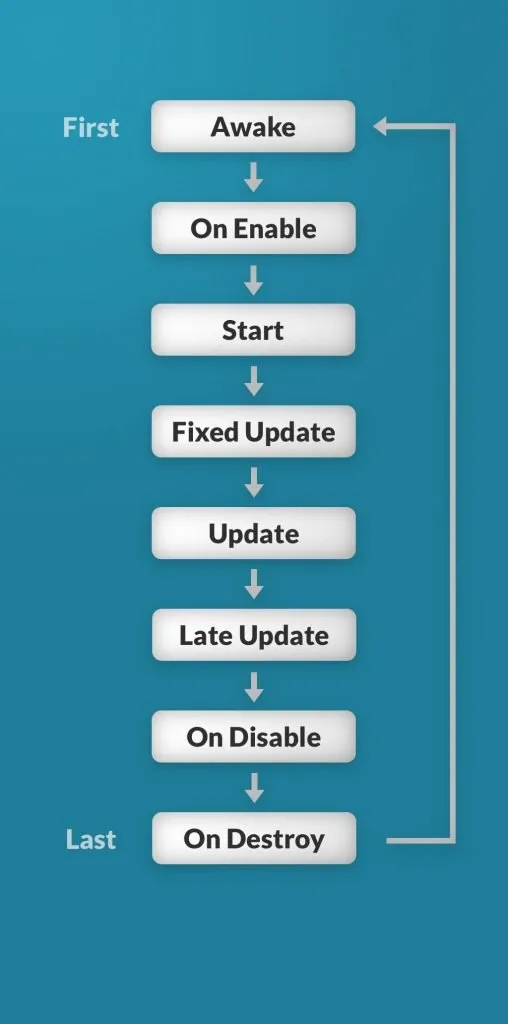

I am worried about step 1, as other users have reported that using data channels for high-frequency data (sending the camera position each frame, see #83) was not working well, most likely due to the buffering that the data channels do at the SCTP protocol level. For step 6, have you looked into video multiplexing? It seems this is the way forward to send metadata associated with a video frame and ensure synchronization. Again, see #83 and especially this comment, although as pointed out there this would require some work from us, if it is feasible at all. Otherwise, there seems to be no major concern for the external video track feature. That should work with it as currently [...]

Thanks for the update too.

- The render server configures the VTK view frustum according to the newest render request. Any render request received before the newest request is discarded. The render server may hence receive multiple render requests for each frame it actually renders, and only the newest request is rendered.
- The render server renders the left and right eye to two separate RGBA bitmaps.
- The two bitmaps are merged side by side into one single bitmap. The horizontal resolution of the merged bitmap is thus twice the resolution of each individual bitmap.
- The merged bitmap is fed into the video stream along with the render request ID.
- The video is streamed from the render server to the client using WebRTC.
- The client receives the video stream and displays it as a quad texture.

Note that the client needs to know the render request ID for each received frame in the video stream.

At the moment, we are using a temporary workaround for the following steps:

5. Each frame is compressed to a JPEG image.
6. We are using a raw TCP socket connection to send each frame to the client along with the corresponding render request ID.

Let me know if you need any further clarification or if you have any ideas on how to improve the data [...]

Thanks for the details.
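To make the merge step and the TCP workaround from this comment concrete, here is a minimal Python sketch. It is not the code used in the thread: the header layout (an 8-byte request ID followed by a 4-byte payload length, big-endian) and all function names are assumptions chosen for illustration, and the bitmaps are assumed to be tightly packed RGBA with no row padding.

```python
import struct

# Hypothetical wire format (an assumption, not taken from the thread):
# 8-byte unsigned render request ID, then 4-byte unsigned payload length,
# both in network byte order.
HEADER = struct.Struct("!QI")
BYTES_PER_PIXEL = 4  # RGBA


def merge_side_by_side(left: bytes, right: bytes, width: int, height: int) -> bytes:
    """Merge two tightly packed RGBA bitmaps into one of size (2 * width, height)."""
    row = width * BYTES_PER_PIXEL
    if len(left) != row * height or len(right) != row * height:
        raise ValueError("bitmap size does not match width and height")
    merged = bytearray()
    for y in range(height):
        # Each output row is the left-eye row followed by the right-eye row.
        merged += left[y * row:(y + 1) * row]
        merged += right[y * row:(y + 1) * row]
    return bytes(merged)


def pack_frame(request_id: int, payload: bytes) -> bytes:
    """Prefix an encoded frame (for example JPEG bytes) with its render request ID."""
    return HEADER.pack(request_id, len(payload)) + payload


def read_exact(stream, count: int) -> bytes:
    """Read exactly `count` bytes; a TCP stream may deliver a frame in pieces."""
    chunks = []
    remaining = count
    while remaining > 0:
        chunk = stream.read(remaining)
        if not chunk:
            raise EOFError("stream closed in the middle of a frame")
        chunks.append(chunk)
        remaining -= len(chunk)
    return b"".join(chunks)


def read_frame(stream):
    """Return (request_id, payload) for the next frame on a binary stream."""
    request_id, length = HEADER.unpack(read_exact(stream, HEADER.size))
    return request_id, read_exact(stream, length)
```

On the client side, `read_frame` can be given any binary file-like object, for example the result of `socket.makefile("rb")`. Because TCP has no message boundaries, the length prefix is what lets the receiver keep each frame paired with its request ID.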

The application is using VTK for rendering.

If you want to give it a try, however, and submit a PR for that, then we can talk about how to proceed.

As requested on the mixedreality-webrtc Slack channel, I'll describe our use case here:

We are doing raycast volume rendering for a HoloLens 2. As the HoloLens is not powerful enough for this type of rendering, we are doing remote rendering on a PC.

- Client: the Unity app running on the HoloLens.
- Render server: a desktop application running on a PC with a powerful graphics card.

I appreciate these are not great answers, and there is no way currently to do any of that without modifying the code of MixedReality-WebRTC and/or the code of the WebRTC UWP project.

Hi, regarding the custom video source, I assume you mean being able to send to WebRTC custom frames coming from your app and not directly from the camera, like generated images. I would like to add that feature eventually, as well as allow the application to manipulate the camera itself. However, I have seen little demand for it so far, and I currently have other high-demand features in the works, so I wouldn't expect it in the short term.

For the Azure Kinect DK, I unfortunately didn't get a chance yet to try it with this project. For separate RGB or depth, I think this should work, though this is probably not very interesting. If you want synchronized RGB-D frames from both the RGB and depth sensors, however, then these need to be captured via some specialized API like MediaFrameSourceGroup on UWP.